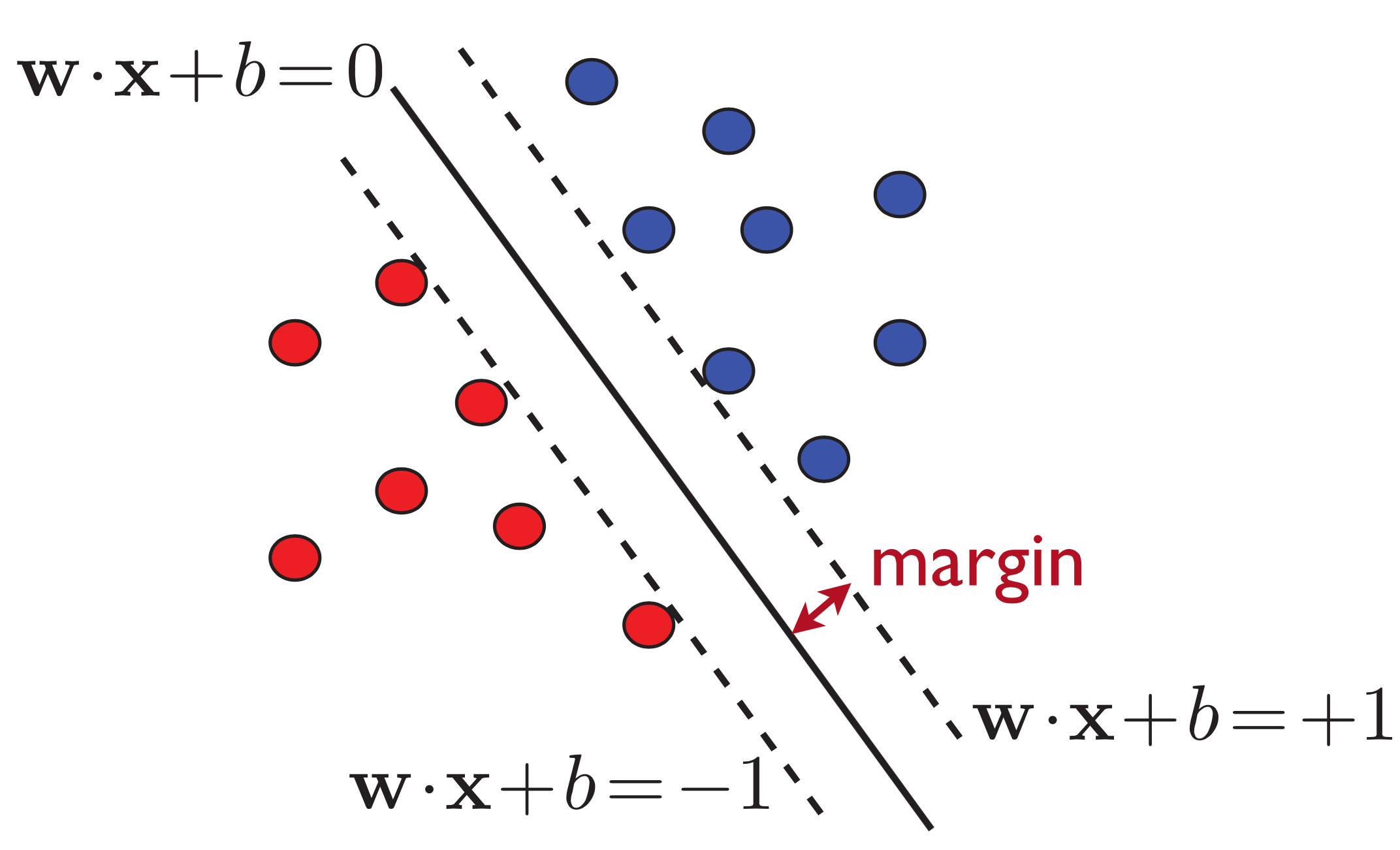

1.考虑在线性可分集上的超平面将两类点分开

设图中的超平面为\( w^T x + b = 0 \), 样本集到超平面的距离为:\( \rho = \textrm{min} \frac{y_i(w^T x_i + b)}{|w|} = \frac{a}{|w|}\) (即图中的margin为\(\frac{a}{|w|}\))

SVM的优化目标:最大化\(\frac{a}{|w|}\),约束为\( y_i(w^T x_i + b) \geq a\)

令\( \hat{w} = \frac{w}{a}, \hat{b}=\frac{b}{a}\), 则优化目标变为:最大化\(\frac{1}{|\hat{w}|}\),约束为\( y_i(\hat{w}^T x_i + \hat{b}) \geq 1\)

注意:将超平面由\( w^T x + b = 0 \)变换到\( \hat{w}^T x + \hat{b} = 0 \)并不改变分类结果,因为\( \textrm{sign}(w^T x + b) = \textrm{sign}(\hat{w}^T x + \hat{b})\)

不妨将优化目标记为:\(\textrm{min } \frac{1}{2}{|w|}^2, s.t. y_i(w^T x_i + b) \geq 1, \forall i\)

Lagrange乘数法与KKT条件

构造拉格朗日函数(\(\alpha_i\)非负):\(L(w,b,\alpha)=\frac{1}{2}|w|^2-\sum{\alpha_i[y_i(w^T x_i + b)-1]}\)

使得\(L\)取得极值的\(w,b,\alpha\)需满足:\(\frac{\partial L}{\partial w}=0;\frac{\partial L}{\partial b}=0;\forall i,\alpha_i[y_i(w^T x_i + b)-1]=0\). 即:

\[w=\sum{\alpha_i y_i x_i}\] \[\sum{\alpha_i y_i}=0\] \[\alpha_i=0 \textrm{ OR } y_i(w^T x_i + b)=1, \forall i\]

则

对偶问题

转化成求如下对偶问题: \[\textrm{max} Q(\alpha)=\sum{\alpha_i}-\frac{1}{2}\sum\sum{\alpha_i y_i \alpha_j y_j x_i^T x_j} \textrm{ s.t. } \sum{\alpha_i y_i}=0, \alpha_i \geq 0\]

注意,支持向量的\(\alpha_i \neq 0\),即满足\(y_i(w^T x_i + b)=1\)的点,求解该对偶问题可以得到\(\alpha_i\),则

\[w = \sum{\alpha_i y_i x_i}\] \[b = y_i - w^T x_i \textrm{ (Any } (x_i, y_i) \textrm{ which satisfies } \alpha_i \neq 0 \textrm{ will be alright.)}\]

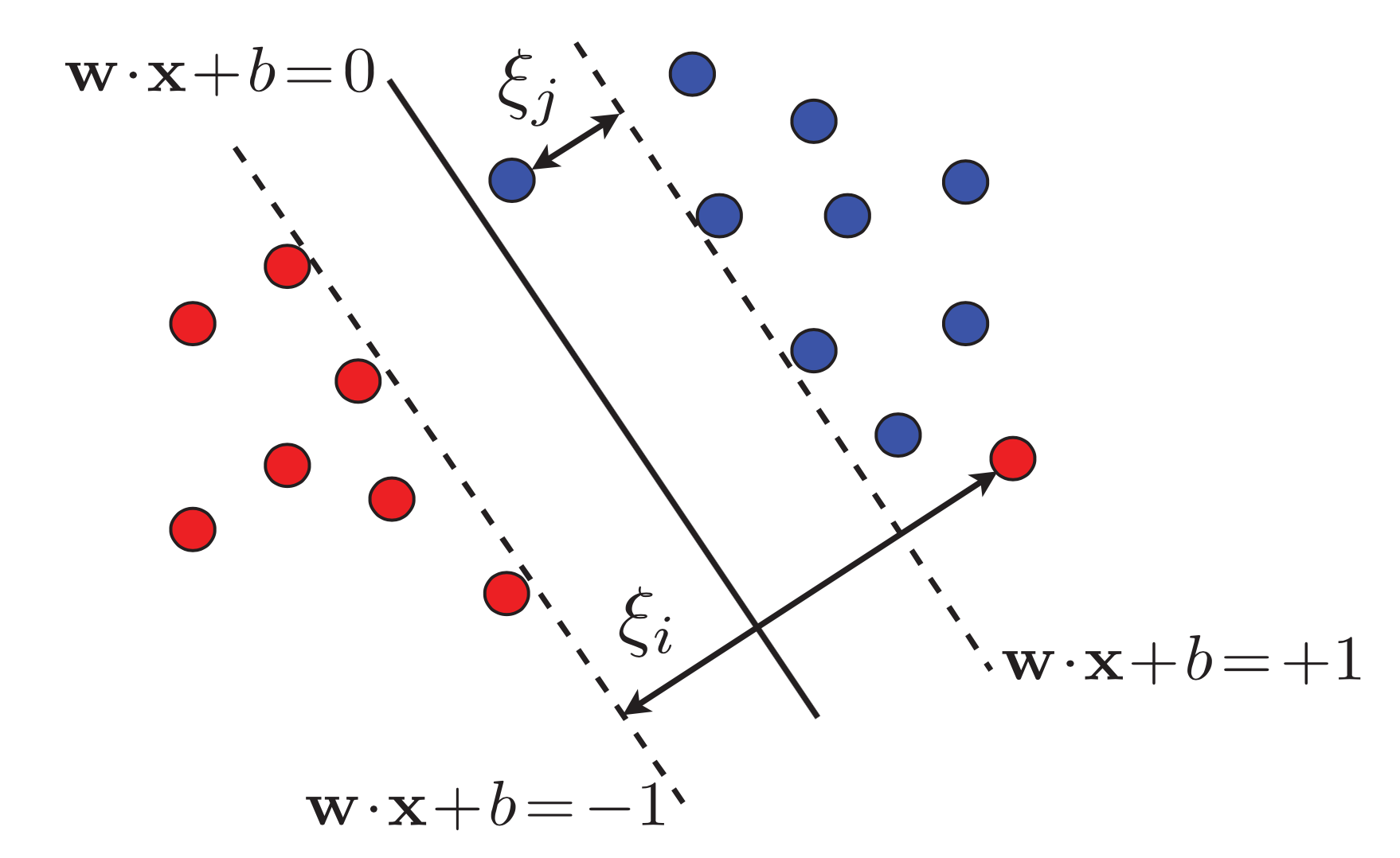

2.考虑在线性不可分集上的情况

\(\exists x_i\), 使得\(y_i(w^T x_i + b) < 1\), 此时的优化目标引入松弛变量\(\xi_i\).

\[\textrm{min }\frac{1}{2}|w|^2 + C\sum{\xi_i}\] \[ \textrm{ s.t. } y_i(w^T x_i + b) \geq 1 - \xi_i, \xi_i \geq 0, \forall i\]

Lagrange乘数法与KKT条件

构造拉格朗日函数(\(\alpha_i, \beta_i\)非负): \[L(w,b,\xi,\alpha,\beta)=\frac{1}{2}|w|^2 + C\sum{\xi_i} - \sum{\alpha_i[y_i(w^T x_i + b)-1+\xi_i]} - \sum{\beta_i \xi_i}\]

使得\(L\)取得极值的\(w,b,\xi,\alpha,\beta\)需满足:\(\frac{\partial L}{\partial w}=0;\frac{\partial L}{\partial b}=0;\forall i,\frac{\partial L}{\partial \xi_i}=0;\forall i,\alpha_i[y_i(w^T x_i + b)-1+\xi_i]=0;\)

\(\forall i, \beta_i \xi_i = 0\). 即:

\[w=\sum{\alpha_i y_i x_i}\] \[\sum{\alpha_i y_i}=0\] \[\alpha_i + \beta_i = C\] \[\alpha_i=0 \textrm{ OR } y_i(w^T x_i + b) = 1 - \xi_i, \forall i\] \[\beta_i=0 \textrm{ OR } \xi_i = 0, \forall i\]

则

对偶问题

转化成求如下对偶问题: \[\textrm{min } Q(\alpha)=\frac{1}{2}\sum\sum{\alpha_i y_i \alpha_j y_j x_i^T x_j} - \sum{\alpha_i} \] \[\textrm{ s.t. } \sum{\alpha_i y_i}=0, C \geq \alpha_i \geq 0, \forall i\]

求解该对偶问题可以得到\(\alpha_i\),则 \[w = \sum{\alpha_i y_i x_i}\] \[b = y_i - w^T x_i \textrm{ (Any } (x_i, y_i) \textrm{ which satisfies } 0 < \alpha_i < C \textrm{ will be alright.)}\]